Sixth post in a series on building business process automation at scale. Infrastructure. Automation. Where automation fails. Statistical validation. When models disagree. This time: what you actually do about it.

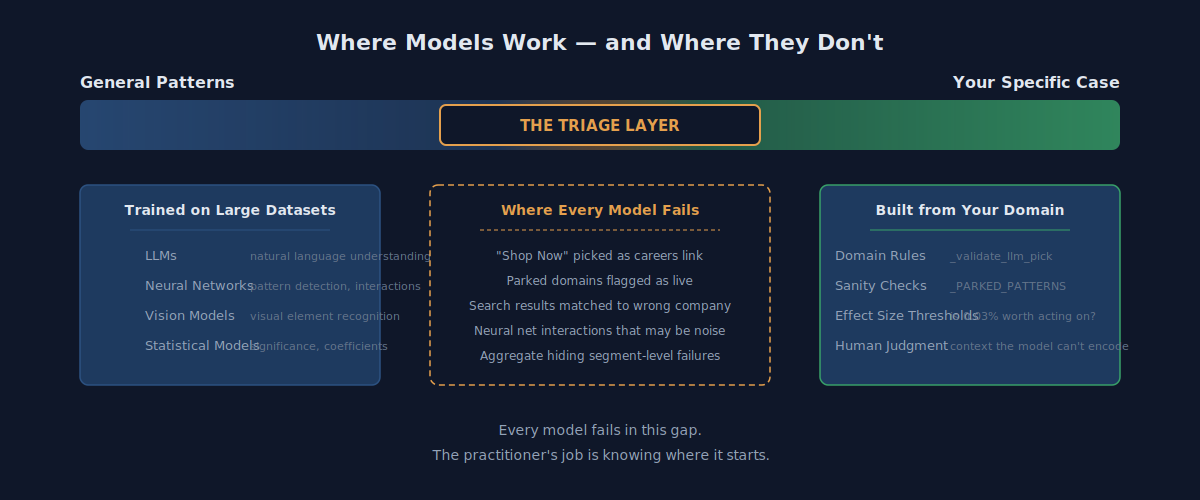

The short version: When two tools give you different answers from the same data, you don't pick the fancier one. You ask three questions: Is the simple answer good enough? Do the tools disagree on the direction of the answer, or just on how big the effect is? And what happens if you're wrong? The AI tools are trained on broad, general knowledge. Your business has specific quirks they've never seen. The gap between "general" and "yours" is where things break, and where experience matters most.

Last post I left it at "when models disagree, you need the human." That's true. It's also useless advice. Every vendor says "human in the loop." Nobody tells you what the human is supposed to do when they get there.

Here's what you actually do: triage. And the triage depends on understanding one thing that gets lost in the AI hype.

AI Knows the General Rules. It Doesn't Know Your Business.

Every AI tool — whether it's a statistical model, a neural network, or a chatbot — learned by studying massive amounts of data. An AI that scans websites learned what careers pages generally look like by seeing millions of them. A statistical model learned what generally predicts ad performance by analyzing hundreds of thousands of bids.

When your situation looks like what the AI studied, it works great. "Careers" in the top navigation? The AI nails it every time because that's what careers pages generally look like.

When your situation is different from the general case, the AI fails — but it doesn't tell you it's failing. It gives you an answer with the same confidence. A vision AI picks the "Shop Now" button as the careers page because it's the most prominent element on the screen. That's what "important buttons" generally look like. It's just not a careers link.

The gap between "what things generally look like" and "what your specific case looks like" is where every AI tool fails. That gap is always there. The question is how wide it is.

The hype says AI handles everything. The reality is AI handles the 80% that looks like the general case. The other 20% — the part that's specific to your business, your market, your edge cases — that's where you need something else.

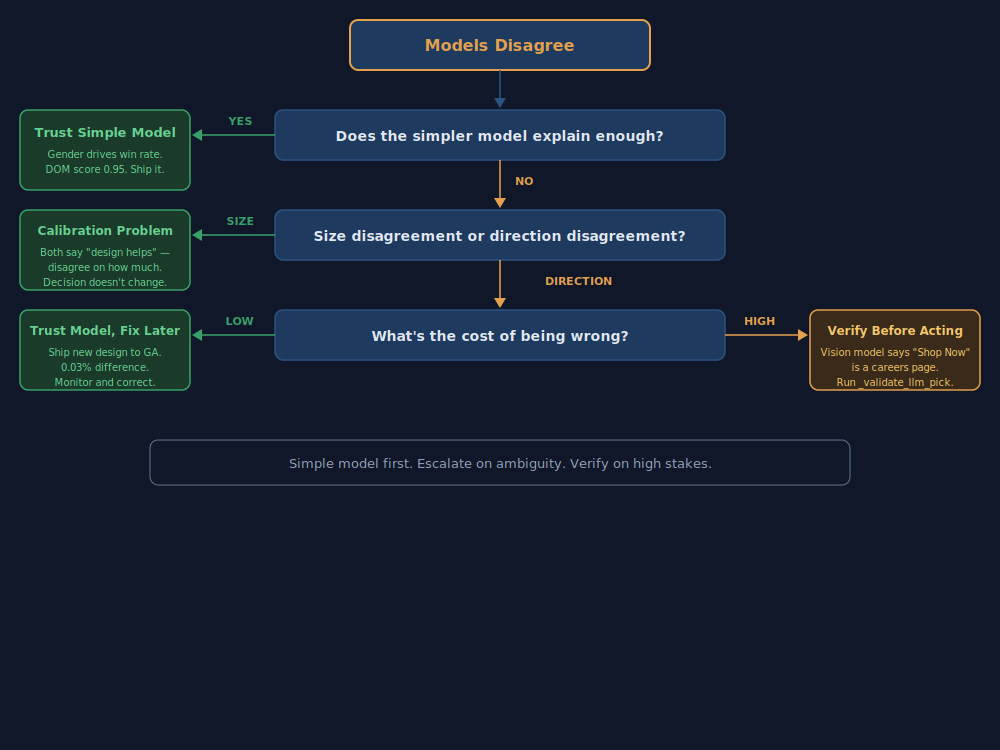

The Triage: Three Questions

When two tools give you different answers, ask these three questions in order.

1. Is the simple answer good enough?

Start with the straightforward tool. If it gives you a clear answer, use it. Don't go looking for complexity you don't need.

Example from ad tech: We asked "what predicts winning bids?" The straightforward statistical model said: gender. That's it. Women's bids win 30% of the time, men's 20%. The fancier neural network found additional patterns in specific states — but those effects were tiny, 1-2%. For a bidding strategy decision, the simple answer is enough. You don't need to rebuild your strategy for effects that small.

Example from business verification: Our basic scoring system looks at every link on a website and says "this one says 'Careers' in the main navigation — 95% confident that's the careers page." When the simple answer is that clear, you don't need to bring in the AI. Take the answer and move on.

The rule: if the simple answer is clear, use it. Only bring in the complex tools when the simple one can't figure it out.
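Here's a minimal sketch of that rule in Python. It is an illustration, not our production code: the `Answer` type, the `simple_first` function, and the 0.9 threshold are all assumptions made up for this example. The one thing it tries to show is that the complex tool is never even called when the simple answer is clear.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Answer:
    value: Optional[str]  # e.g. the link picked as the careers page, or None
    confidence: float     # 0.0 to 1.0, as reported by the tool
    source: str           # which tool produced the answer


def simple_first(
    simple: Answer,
    complex_tool: Callable[[], Answer],
    threshold: float = 0.9,  # hypothetical cutoff; tune it on your own data
) -> Answer:
    """Use the simple answer when it is clear; otherwise escalate."""
    if simple.value is not None and simple.confidence >= threshold:
        return simple
    # Only pay for the complex tool when the simple one can't decide.
    return complex_tool()


# A clear simple answer: the vision tool is never invoked.
clear = Answer(value="/careers", confidence=0.95, source="scoring")
vision = lambda: Answer(value="/jobs", confidence=0.70, source="vision")
result = simple_first(clear, vision)
```

Passing the complex tool as a callable rather than as a precomputed answer is deliberate: it keeps the expensive call lazy.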

2. Do they disagree on the answer, or just on how big it is?

There's a huge difference between two tools saying "the new design helps, but we disagree on how much" and two tools saying "one says it helps and the other says it hurts."

The first one — disagreeing on how much — is fine. Your decision is the same either way. Ship the new design.

The second one — disagreeing on the direction — is a real problem. Your decision flips depending on which tool you trust.

Example from ad tech: Both our models agreed: the new ad design improves conversions in most markets. They disagreed on the underlying mechanism, not on whether it helps. That doesn't change the decision. Ship it selectively, in the markets where it improves conversions.

Example from business verification: The basic scoring system says "there's no careers page on this website." The AI vision tool says "I found one — it's this button." You look at the button. It says "Shop Now." The two tools aren't just disagreeing on magnitude — they're pointing in opposite directions. That's when you need a tiebreaker, and the tiebreaker is usually a simple sanity check: does this answer even make sense?

The rule: if they agree on the direction but disagree on the size, move forward. If they disagree on the direction, stop and verify.
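One way to make the direction-versus-size check mechanical, sketched in Python. The function name, the labels it returns, and the 10% tolerance are assumptions for illustration. It treats each tool's output as a signed effect estimate (positive means "helps", negative means "hurts", zero means "no effect").

```python
import math


def _sign(x: float) -> int:
    """Return 1, -1, or 0 for positive, negative, or zero effects."""
    return (x > 0) - (x < 0)


def classify_disagreement(effect_a: float, effect_b: float, rel_tol: float = 0.1) -> str:
    """Label how two effect estimates relate: 'agree', 'size', or 'direction'."""
    if _sign(effect_a) != _sign(effect_b):
        # Includes "no effect" vs "some effect", which is treated
        # conservatively as a disagreement on direction.
        return "direction"
    if math.isclose(effect_a, effect_b, rel_tol=rel_tol):
        return "agree"
    return "size"


# Both tools say the design helps, but by different amounts: move forward.
same_way = classify_disagreement(0.05, 0.02)
# One says it helps, the other says it hurts: stop and verify.
opposite = classify_disagreement(0.05, -0.01)
```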

3. What happens if you're wrong?

Some wrong answers are cheap. Some are expensive. The triage depends on which kind you're dealing with.

Cheap to be wrong: You ship the new ad design to males in Georgia and the old design was actually 0.03% better. You lost almost nothing. Trust the model, move fast, correct later.

Expensive to be wrong: The AI marks a shopping page as a careers page. A downstream process tries to scrape job listings from a product catalog. You've wasted time, polluted your data, and someone has to clean up the mess. Verify before acting.

Really expensive to be wrong: In supply chain, a misclassified part gets the wrong category code, which pulls it into the wrong purchasing strategy, which routes it to the wrong supplier, which affects production schedules across multiple plants. One bad data point at the top compounds through every decision below it.

The rule: low stakes → trust the model and correct if needed. High stakes → verify first.
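Putting the three rules together, the triage can be sketched as a single function. How the questions combine is my reading of "ask them in order", so treat it as one possible arrangement rather than a fixed recipe. The `Stakes` enum, the `next_step` function, and the returned strings are hypothetical names for this example.

```python
from enum import Enum


class Stakes(Enum):
    LOW = "low"    # cheap to be wrong
    HIGH = "high"  # expensive to be wrong


def next_step(simple_is_clear: bool, same_direction: bool, stakes: Stakes) -> str:
    """Walk the three triage questions in order and return what to do next."""
    # 1. Is the simple answer good enough?
    if simple_is_clear:
        return "use the simple answer"
    # 2. Do the tools disagree on direction, or only on size?
    if not same_direction:
        return "stop and verify"
    # 3. What happens if you're wrong?
    if stakes is Stakes.HIGH:
        return "verify first"
    return "trust the model, correct later"


# Tools agree on direction and a mistake is cheap: act now.
ad_design = next_step(simple_is_clear=False, same_direction=True, stakes=Stakes.LOW)
# Tools point in opposite directions: verify regardless of stakes.
shop_button = next_step(simple_is_clear=False, same_direction=False, stakes=Stakes.LOW)
```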

What the AI Vendors Don't Sell

Every AI vendor sells the smart tool. The chatbot that understands natural language. The vision system that reads screenshots. The neural network that finds hidden patterns. All real capabilities.

Nobody sells the triage layer.

The triage layer is the unglamorous part. It's the checklist that catches when the AI picks "Shop Now" instead of "Careers." It's the business knowledge that says "a website returning a valid response doesn't mean it's a real business." It's the experience that tells you a 0.03% improvement isn't worth rebuilding your strategy for.

The triage layer is built from every mistake you've caught, every false positive you've corrected, every time the AI was confidently wrong and you figured out why. It doesn't come from the vendor. It comes from running the system and paying attention to where it breaks.
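In code, a triage layer can start as nothing more than a named list of sanity checks that grows each time you catch a new mistake. The sketch below is illustrative only: the keyword lists, the check names, and the candidate format are assumptions, not the checks we actually run.

```python
from typing import Callable, Dict, List

# A candidate link proposed by some tool, e.g.
# {"text": "Shop Now", "url": "https://example.com/shop"}
Candidate = Dict[str, str]
Check = Callable[[Candidate], bool]

# Hypothetical keyword lists; yours come from the mistakes you've caught.
CAREERS_HINTS = ("career", "jobs", "join us", "work with us")
STORE_HINTS = ("/shop", "/cart", "/product")


def text_mentions_careers(candidate: Candidate) -> bool:
    text = candidate.get("text", "").strip().lower()
    return any(hint in text for hint in CAREERS_HINTS)


def url_is_not_a_store(candidate: Candidate) -> bool:
    url = candidate.get("url", "").lower()
    return not any(hint in url for hint in STORE_HINTS)


# Add a new entry here every time the AI is confidently wrong in a new way.
CHECKS: Dict[str, Check] = {
    "text_mentions_careers": text_mentions_careers,
    "url_is_not_a_store": url_is_not_a_store,
}


def failed_checks(candidate: Candidate) -> List[str]:
    """Return the names of the sanity checks this candidate fails."""
    return [name for name, check in CHECKS.items() if not check(candidate)]


failures = failed_checks({"text": "Shop Now", "url": "https://example.com/shop"})
```

Returning the names of the failed checks, rather than a bare pass or fail, gives the person doing the review a reason to look at.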

That's why it doesn't scale automatically — which is exactly why it's valuable. The AI tools are available to everyone. The triage layer is specific to your business. It's the competitive advantage that can't be bought off the shelf.

Where This Series Has Been Going

Six posts. One argument.

- The Sovereign Stack: own your infrastructure. Don't rent what you can build for less.

- Know Your Business: automate at scale. Process thousands of companies a day instead of dozens.

- The 80% Problem: automation works at 80%. That's the same ceiling every industry has hit for decades. Speed without accuracy is just making mistakes faster.

- When the Numbers Lie: averages hide problems. Break your results down by segment or you're flying blind.

- From Proving to Predicting: two tools, same data, different stories. The question isn't which tool is better. It's which answer you trust.

- This post: here's how you decide. Simple answer first. Check the direction. Weigh the stakes. And build the triage layer that the vendors can't sell you.

The AI tools work. Within their lane. The lane is broad, general knowledge. Your business runs on specific knowledge. The practitioner's job is knowing where the lane ends — and building the bridge for everything past that point.

Next up: how you actually monitor where "general" meets "specific" in a live system. What breaks, how you catch it, and what the data looks like when your triage layer is working.

Get in touch if you're building the same kind of bridge.